In the previous post, we built a formal ontology from the Z-Machine specification, used it to drive code generation, and then turned it into a test oracle. At the end of that work, I hinted that the next step might be to put an LLM directly in the loop and watch it actually play one of those games. That’s what this post does.

It’s actually fairly complicated to provide a fully working LLM-backed example that someone can use on their own, at least when you want it to be free for everyone. What this post does is try to provide you that, however it does require some lift on your part to get it set up and working locally.

Even if you don’t want to work with the project I’ll show here, I will try to cover the details here broadly enough that you can understand what I’m talking about, including screenshots of what you would see. It’s worth noting that there is no coding in this post at all.

The Context

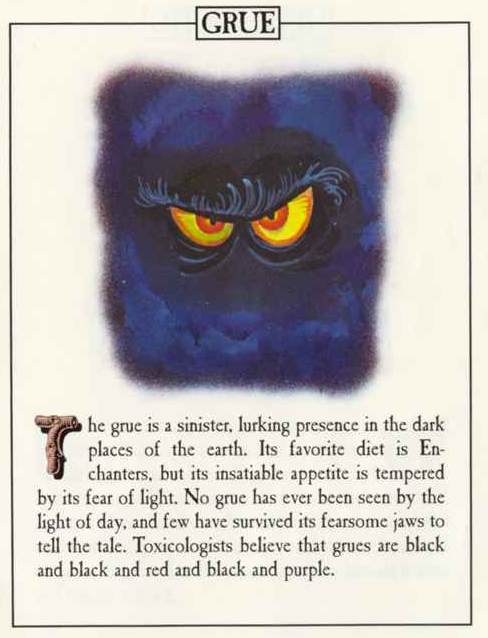

The project I’ll use here I’ve called Grue Whisperer. It’s a browser-based application that lets you play Zork I, II, and III using natural language instead of exact parser commands. The name comes from the horse whisperer tradition: you don’t command a wild or opaque thing, you speak its language. In Zork, the grue is the iconic monster.

It’s a monster that lurks in the dark and kills you if you blunder into it. A whisperer doesn’t blunder. They approach with understanding. Applied here, you’re not typing rigid commands at a 1980s parser anymore. You’re conversing with the dark.

There’s a small inversion buried in the name worth noting: in Zork, the grue is what you fear. The whisperer framing implies you’ve flipped that dynamic. You’re the one who understands the dark now, not the one threatened by it.

The reason this project even exists as part of this blog series is to specifically pose a question the previous posts didn’t address: what does it mean to test software when an LLM is the interface layer? We’ll get to that question, but first let’s take a look at what the text adventure limitations broadly are and why or whether an LLM would have any relevance to those.

The Challenge with Parser-Based Interactive Fiction

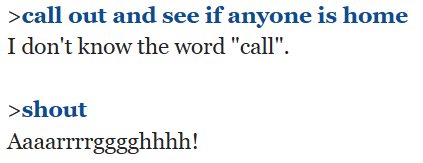

Text adventures, or interactive fiction, work through a parser: a command interpreter that accepts a narrow vocabulary of verb-noun phrases and advances the game state accordingly. TAKE LAMP. GO NORTH. EXAMINE DOOR. The parser matches your input against a list of known commands and responds if it finds a match. If it doesn’t, you get “I don’t understand that” or the slightly more generous “I don’t know the word [whatever].” The interaction model is fundamentally a vocabulary test as much as it is a game.

Infocom understood this and pushed the parser further than almost anyone else. Their parser was famously the best of its era: it could handle multi-noun sentences, prepositions, pronouns, and a surprising range of synonyms. But even the best 1980s parser has hard edges. The vocabulary is finite, the syntax is constrained, and when you step outside either, the illusion breaks. The game stops feeling like a world and starts feeling like a program that doesn’t understand you. That’s not a failure of craft. It’s a structural property of the medium.

The second limitation is subtler and has nothing to do with the parser at all. It has to do with what the author could put in. The prose in these games is often genuinely good, but it was written under real constraints: disk space, memory, and the practical reality that an author can’t anticipate every combination of player action and game state and write atmospheric prose for all of them. A room description gets written once. A response to an unrecognized command gets a generic fallback. The game is as rich as the author could make it, within the limits of a system that had no mechanism for filling in what wasn’t there.

This is exactly where an LLM can (possibly!) add something without taking anything away. The game’s output is still ground truth. The mechanics are still the author’s. The story doesn’t change. But a bare “Nobody’s home.” can become a moment: the hollow echo, the stillness, the sense that this place has been empty for a long time. The LLM isn’t rewriting the fiction. It’s doing what a good narrator does with stage directions: bringing atmosphere to what the text establishes as fact. Grue Whisperer is built around that idea.

Note the “possibly” above. That’s deliberate. After all, that is what would have to be tested. So, for purposes of this post, let’s say your development team has provided a working solution and you want to consider how to engage with that solution and what the testing landscape might look like.

To get started down that path, we have to look at what the project actually does and how to run it.

How It Works

The Z-Machine Layer

The games in Grue Whisperer run entirely in your browser. This is partly possible because Infocom built the Z-Machine in the late 1970s as a portable virtual machine: the same compiled story file could run on dozens of incompatible home computers by shipping a thin interpreter for each platform. The game data itself has never changed.

Grue Whisperer uses my Voxam project, which is a JavaScript Z-Machine interpreter. When a game starts, Voxam loads the story file, runs it until it waits for input, and returns the opening text. From that point on, every command passed to the Z-Machine advances the story and returns a response. This happens synchronously in the browser. There’s no server, no game state stored remotely. The interpreter is entirely client-side.

The LLM Layer

Classic text adventure parsers are brittle. PUT LAMP IN CASE works. LEAVE THE LANTERN WITH THE OTHER TREASURES does not. Grue Whisperer layers an LLM between the player and the parser using Tambo, which handles conversation history, streaming responses, and tool orchestration.

The flow for each player message is:

- The player types a message in natural language.

- Tambo sends the message to the LLM along with the conversation history.

- The LLM calls the sendGameCommand tool (provided as part of Grue Whisperer) with one or more parser commands that match the player’s intent.

- The tool executes each command against the Z-Machine running in the browser and returns the game’s response text.

- The LLM presents the result to the player, rewriting any parser errors as in-world moments rather than exposing them.

The tool boundary is worth dwelling on. The LLM never directly controls the Z-Machine. It calls a tool. The tool executes a command. The game returns text. The LLM narrates. These are four distinct steps and the separation matters: the LLM cannot invent game outcomes. It can only receive what the Z-Machine actually returned and present it.

This distinction will matter quite a bit when I show you how to run the original Zork games and how to run them with an LLM interface.

The Two Prompts

All model behavior is controlled from a single file: prompts.ts. There are two prompts and they operate at different levels.

The systemPrompt governs the entire session. It establishes the model’s identity as an in-world narrator, instructs it to call the tool for every player message without exception, defines how to handle parser errors (rewrite them as narrative, never break the fourth wall), and tells it to decompose compound requests into sequential tool calls. A message like “go north then grab the lamp and turn it on” should produce three separate tool calls, not one.

The commandDescription is scoped narrowly to the sendGameCommand tool itself. It tells the model when to call the tool, how to format command strings, and that it must never invent game output that is inconsistent with what the world model allows. Some instructions appear in both prompts, and that’s deliberate. Repetition across the system prompt and the tool description reinforces the behaviors that have to hold consistently.

Both prompts are plain strings. Changing how the model behaves (how much flavor text it adds, how literally it interprets input, how it handles errors) is a matter of editing those strings.

As a tester, those are your controllability hooks. That’s a key component of the testability for this kind of tooling.

Getting Started

Prerequisites

If you want to try this out for yourself, you’ll need Node.js 22 or later and a Tambo account with an API key. Setting up this account and getting an API key is entirely free.

Installation

You can grab the repo and get everything set up, if you want to play along:

git clone https://github.com/jeffnyman/grue-whisperer cd grue-whisperer npm ci

Tambo Project Setup

Log in to tambo.co and create a new project. In the project settings, you’re going to want to enable Allow system prompt override. This is what allows Grue Whisperer to pass its own system prompt and establish the narrator identity at session start. Without it, the model will behave generically rather than as an in-world narrator.

In Tambo, API keys are generated per project so when you create a new project, you’ll want to generate an API key and then copy that key for the next step.

Configuration

cp .env.example .env

Open .env and set your key:

VITE_TAMBO_API_KEY=your_key_here

Tambo has a limit to how many messages you can send in a free tier project, but it’s currently 500, which is more than enough to play around with this project.

If you find yourself text adventuring a lot and hitting the limit, you could always just create a new project, get the new API key from that new project, and use that in your .env file.

Running

This project uses Vite so you can just run the dev instance:

npm run dev

Your browser should open automatically but if it doesn’t, just go to http://localhost:5173. You could select a game and start playing but let’s talk about what “start playing” means because what I’m really saying is “start testing.”

The Games in Action

Before looking at the LLM-assisted experience, it’s worth establishing what the raw parser expects. I put up some examples of the original games, which will also run in your browser.

Those examples show the classic interaction style for each of the three Zorks: the kind of input that worked in 1980 and still works today if you talk to the parser on its own terms.

Zork I: The Great Underground Empire

The description you get when you start the game is, in part, “You are standing in an open field west of a white house, with a boarded front door.” Let’s try a simple interaction.

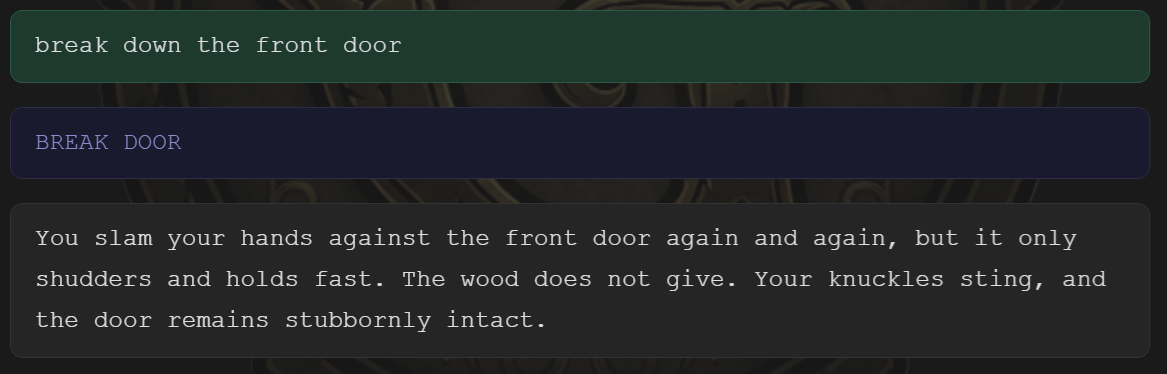

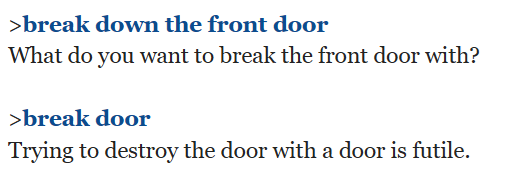

Here I’ve turned on the actual command being generated (with the “Show game commands” toggle at the top). So the command I entered is in green, the command passed to Voxam is in blue, and the game output is below both.

Notice the difference in the classic version.

What you see in the classic version is what the game is authored to provide with those commands. I’m showing you both the command I tried in Grue Whisperer as well as the command that Grue Whisperer generated.

What you see in the Grue Whisperer version is the model trying to add some atmosphere.

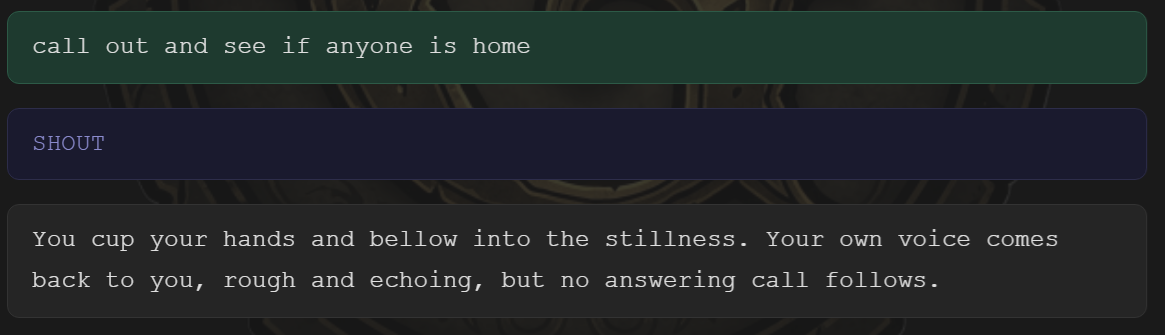

Let’s try another example:

Here is how that would look in the classic version (again, both with the command I actually tried and the one that was generated):

I trust you can see what the model is doing that differs from the original.

Engaging the Game

Now, I could sit here and show you screenshots all day to compare but, instead, let me show you a few initial moves based on dialogue I had with the game in the Grue Whisperer version. Here is the start of another session (and notice the non-determinism creeping right in with the first command):

> break down the front door

You set your sights on the front door, preparing to force it open.

Your fists clench and you steel yourself, but then you realize—you’ll need something to actually break down the front door with. What do you want to use?

> what am I carrying?

You check your inventory for anything that might be useful.

You are empty-handed. There isn’t anything in your possession that you could use to break down the front door.

> check out the mailbox

You turn your attention to the mailbox, wondering if there’s anything useful inside.

The small mailbox stands before you, closed and waiting.

> check out what’s inside the mailbox

You open the small mailbox to see what might be hidden inside.

You open the small mailbox. Inside, you find a leaflet waiting for discovery.

> what does the leaflet say?

You take the leaflet and read its message:

"WELCOME TO ZORK!

ZORK is a game of adventure, danger, and low cunning. In it you will explore some of the most amazing territory ever seen by mortals. No computer should be without one!"

> The leaflet suggests I'm in a computer game?

The words on the leaflet fill you with a strange sense of awareness—as though the world you stand in is part of some grand adventure, crafted to test your wits and daring. Yet your surroundings are as tangible as ever, the scent of earth, the feel of the cool breeze, and the soft weight of the leaflet in your hand. Here, mysteries await, no matter what the leaflet might suggest.

> This may sound odd, but can I get into the mailbox?

You contemplate the mailbox with a curious thought: could you possibly squeeze inside?

You try to fit yourself into the mailbox, but all you accomplish is bumping your head against the small metal door. Clearly, it’s not meant for people—just leaflets.

> I tried to break down the door but maybe I should just try opening it?

You turn back to the front door, opting for a gentler approach. You reach out and try opening it.

You grasp the handle and give the front door a try, but it refuses to open. Whatever secrets lie behind it will require another way in.

> Maybe I should walk around the house and see if there’s another way in?

You decide to search for another way inside and begin walking around the house.

You make your way to the north side of the white house. There’s no door here, and every window you see is nailed shut with thick boards. To the north, a narrow path disappears into the trees. It seems you’ll need to keep exploring for another entrance—or maybe follow that path.

Not too terrible, right? Try to do any of that in the original classic version and you’ll see how limited the text parsers were and are. Now, notice what I try next:

> Let's kept searching around the house to find a way in.

You continue your search, moving around the house to see what else you might discover.

You circle to the west side of the house and find yourself back where you started, near the small mailbox. No new entrances here—perhaps the east or south sides will offer something different.

Perhaps the south side of the house will reveal another way in. Would you like to continue your search there or try something else?

A key thing here to notice (although you have to know Zork a bit is that that it didn’t glom onto the one open window that exists in a location called Behind House. (You can get to it by going “northeast” and then “southeast” from the starting location.) Since the model asked a question there, I respond to it:

> Yes, let’s check out all sides of the house and see if we can find a way in.

You make your way around to the next side of the house, determined to inspect every angle for a possible way inside.

On the south side of the house, you find no doors—only more windows, each one boarded tight. You’ve circled most of the house, but still no easy entrance presents itself. Perhaps there’s another side to check, or maybe something else nearby will offer a solution.

You find yourself behind the white house. At last, a glimmer of opportunity—there’s a small window, slightly ajar, set in the corner of the house. Perhaps this is the secret way in that you’ve been searching for. A path also leads into the forest to the east, if your curiosity should guide you further afield.

Notice it’s not quite getting the narrative as cohesive as it could in terms of searching the house. Put another way, why didn’t it find the “behind the white house” the first time I tried? Why did it happen the second time?

The reasoning shows that the model generated “north side –> west side” as a plausible search path, but didn’t complete the circuit because it probabilistically generated a prompt asking what I would like to do next once immediate success wasn’t achieved. The second time, with renewed explicit intent (“check out all sides”), the model’s sampling led it to complete the circuit and discover the window behind the house.

With this, you can see the causality model that Grue Whisperer is using for its context. The AI isn’t “remembering” that it failed to check behind the house. It’s generating each response fresh, weighted by context, but not deterministically bound to complete systematic actions.

This shows a critical design dimension for this model: causal consistency under (seemingly) equivalent prompts. The model can yield different navigational results for semantically similar requests, indicating that state management is emergent rather than explicit. Here “searching around the house” and “check out all sides of the house” triggered different reasoning weights.

What this means is that narrative coherence depends, to some extent, on prompt specificity. (Which, granted, is an issue with any parser.) The model’s “world” doesn’t solely exist as probable next-tokens given context. Which is great, but it does only exist as a partially persistent state.

Text adventures are often used in causal AI training, and one of the main goals is to demonstrate whether the system can appear to “understand” the game world while actually doing nothing more than reconstructing it probabilistically on each turn. If the latter is true, it eventually leads to inconsistencies that traditional game engines (barring design bugs) wouldn’t produce. This is where context window exhaustion would set in.

So, now I continue a bit:

> I should probably look in the window before I decide to go in there.

You approach the small window and peer inside to see what might be waiting beyond.

Peering through the slightly open window, you spot what appears to be a kitchen beyond. The room inside seems quiet and still—perhaps this is your chance to slip inside unnoticed.

> Let's see if we can get inside the window but let’s not make a lot of noise.

Carefully, you attempt to ease through the slightly ajar window, doing your best not to make a sound.

You reach for the small window, only to realize it’s actually closed. You’ll need to open it before you can slip inside.

You’ve now quietly opened the window. Would you like to climb inside into the kitchen? The way is clear.

Now here, let’s focus on my command: “Let’s see if we can get inside the window but let’s not make a lot of noise.” Notice the wording: “Let’s see if we can get inside …” I didn’t say “enter the window” or anything like that. So, now consider again the output we got for that command above.

The reasoning shows that the AI demonstrated uncertain commitment: it started executing (paragraph 1; “Carefully, you attempt…”), then backtracked to state-checking (paragraph 2; “You reach for … it’s actually closed”), then partially completed (paragraph 3; “quietly opened the window”) without finishing the original goal: entering the window. The model assigned weights to different interpretations:

- W(feasibility_check) = 0.4

- W(execute_action) = 0.6

- W(complete_full_intent) = 0.3

The model leaned toward execution but with low confidence, leading to partial commitment: it opened the window but didn’t complete entry, essentially hedging its bets. Why? Well, that’s the interesting part: the phrase “Let’s see if we can” contains two competing interpretations. One is literal/semantic: request for feasibility assessment (“Is this possible?”) The other is pragmatic/idiomatic: tentative action attempt (“Let’s try doing this”).

When I applied some tuning to the model, the weights changed like this:

- W(feasibility_check) = 0.1

- W(execute_action) = 0.9

- W(complete_full_intent) = 0.85

But is that good? Again, this is where good commands (just like good prompting) comes in. I didn’t say “enter the window” unambiguously. I said “let’s see if we can enter the window.”

So, as a developer of this model, if I “fix” the clumsy narration by increasing action completion weights, I risk the model becoming presumptuous about player intent. If I “fix” the presumption by increasing inquiry weights, I risk making every tentative command feel like talking to an overly literal genie.

This is fundamentally a classification problem with asymmetric costs. A false positive would be to act when the player meant to inquire. This is potentially game-breaking and harms player agency. A false negative would be to inquire when the player meant to act. That would be annoying and would likely break immersion.

So even this exploratory round of testing (because that is what I’ve been doing here) suggests that the optimal solution likely involves dynamic weight adjustment based on action stakes, combined with teaching players that ambiguous phrasing yields ambiguous results, which is pretty much what text adventures do already, just in a more mechanical way.

Notice that, as a game author, none the core dynamics or mechanics of my game (in this case, Zork) would change. The window would still need to be opened before the player can go through it. But I can entirely modulate the kind of experience the player has which, arguably, is just an expansion of what it means to be an author of this kind of game experience.

The other thing to notice here is a quality assessment based on the exploratory testing: I’m basically treating the parser like I would a dungeon master in a tabletop game. I’m stating declaratively what I want to think about doing.

Following that last question, I was asked by the model (“You’ve now quietly opened the window. Would you like to climb inside into the kitchen?”), I respond accordingly:

> Yes, let's go into the kitchen.

You slip through the now open window and enter the kitchen.

And then I get a description of the kitchen. It’s undeniable that there is a valid experience that can be had here. Again, imagine if this was how people played text adventures back in the day, similar to playing a Dungeons & Dragons campaign, where you speak your broad intentions. To show that, consider what I did next:

> Let's listen and see if we hear anything. I’m worried someone else might be here.

You hold still and listen carefully, straining to catch any sound that might betray another presence in the house.

The kitchen is still and quiet. If you wish, you can focus your listening on something specific—the staircase, the passage, or the window, for example. Which would you like to listen to more closely?

Now, this is interesting. The reasoning shows that the model is pattern-matching classic text-adventure conventions rather than simulating realistic listening. The model has learned this pattern from its training data and is prompting me to be more specific because that’s how these games typically work, even though my command (“listen and see if we hear anything”) is perfectly reasonable for detecting general ambient sounds or presences. The model conflates two distinct reasoning systems. One is narrative realism (“If someone else is here, I would hear general sounds: footsteps, breathing, movement”) and the other is game mechanics (“LISTEN is a verb that requires a noun object in the parser”).

This is pretty crucial. The model is trying to be helpful by guiding me toward valid game syntax, but in doing so, it’s actually breaking the causal realism that is operative. My concern (“someone else might be here”) would manifest as ambient cues rather than object-specific investigations. What that shows is that the model is genre-aware but may be overfitting to mechanical conventions at the expense of causal coherence. This is a large problem in causal AI at the moment.

What Has Exploratory Testing Showed Me?

Well, some people absolutely do not like this non-determinism and feel that it makes the game harder to play. Others feel that it adds quite a bit of flavor to the game. Yet others agree that it adds flavor but worries that it might introduce elements, as part of that flavor, that are not in the game itself.

All of this is to say that tolerances differ and quality is (largely) subjective. Even more specifically, quality is a shifting perception of value over time. That shifting perception modulates and attenuates with technology changes, of which democratized AI is still finding its place. I don’t know what the end result will be. Personally, as a part-time educator who sees his students using AI to compose their essays and do their thinking for them, I’m more than a bit worried about how AI will continue the trend of humans abdicating their ability to create interesting things to technology. But as a way for humans to engage with interesting things that other humans have created, I do think AI has a place.

Yet, if you were testing this for your company, it’s worth considering your strategy here. You have the original Zork experience and you have the experience your company is providing with an LLM-backed Zork experience. It’s worth really thinking about how you would test and what quality assessments you would make.

All Grue Whisperer does is put an LLM in front of a particular experience (in this case, a text adventure). This is no different, conceptually, than how many companies are deciding to incorporate LLMs into their products and exposing them to users. So the thinking of how to test this game context is not all that far off from any non-game context.

Where (and Whether) DeepEval Fits In

The previous posts in this Z-Machine sub-series had a relatively clean story about testing: build an ontology, derive expectations from it, run a parser against real story files, compare. Pass or fail, warn or not, the oracle was explicit and the comparison was mechanical.

Grue Whisperer doesn’t fit that model. What does it mean for this to work correctly? The Z-Machine either accepts a command or it doesn’t. But the LLM’s job isn’t just to issue commands. It’s to translate intent, narrate results, and absorb failures gracefully. That’s a harder thing to measure.

This is where a tool like DeepEval becomes relevant in this context, and where it gets interesting to think about, even before writing a single test. DeepEval is an LLM evaluation framework that provides metrics for evaluating the quality of model outputs: answer relevancy, faithfulness, hallucination, contextual precision, and others. If you’ve been following this series you’ll have seen it in earlier posts. Here, I’m not going to walk through setup or show working test code. What I want to do is think through which of those metrics actually map onto this problem and where the mapping gets complicated.

What You’d Want to Measure

There are at least three distinct behaviors worth evaluating in Grue Whisperer:

Command fidelity. Did the LLM issue a command that the Z-Machine accepted? This is the most mechanical check and the closest to what we did with the parser tests. A command that produces a parser error (“I don’t understand that”) means the LLM’s translation failed. DeepEval’s faithfulness metric is a reasonable proxy here if you treat the game’s accepted command vocabulary as the “retrieval context” and ask: Did the model stay within it?

Intent preservation. Did the command the LLM chose actually reflect what the player asked for? A player who says “find something to light the way” probably wants the lamp, not a random LOOK command. The answer relevancy metric, which measures how well a response addresses the input, could probe this if you frame the player’s natural language message as the question and the issued command as the answer.

Hallucination. Did the narrator invent outcomes that the game didn’t produce? This is the most critical failure mode. The system prompt is explicit: the LLM must never contradict the game’s text. DeepEval’s hallucination metric is directly applicable here, in that you would compare the model’s narrated response against the game’s actual returned text and check whether anything was fabricated.

Where It Gets Complicated

The tool boundary creates a measurement challenge that’s easy to miss until you’re building the tests. The LLM’s response to the player is composed from two things: the game’s output (ground truth, returned by the tool) and the narrator’s embellishment (the LLM’s contribution). DeepEval metrics need to know which is which to evaluate faithfully. You can’t just feed the full narrated response into a hallucination check without also providing the raw game text as the reference. Building a test harness that captures both (the tool’s return value and the final narrated output) is a non-trivial wiring problem before you even get to writing metrics.

There’s also the question of what counts as a correct multi-step response. If a player says “go north then grab the lamp,” the LLM should make two separate tool calls. An evaluation of the final output can’t easily verify that the calls were made in the right order, or that they were made at all rather than inferred. You would need to inspect the tool call trace, not just the narrated result.

This is actually a familiar shape from the previous posts: automated checking can confirm some things cleanly and others only approximately, and the honest approach is to be precise about which is which rather than inflating confidence by calling uncertain results a pass.

None of this means DeepEval isn’t useful here. It means the evaluation design requires the same care that went into the ontology-driven tests: define what you’re measuring, choose a metric that actually measures it, and be explicit about what the metric can’t see. Hallucination is checkable. Intent preservation is approximate. Command fidelity requires the tool trace, not just the output. Knowing which is which before you start writing tests saves a lot of expensive discovery later.

And Then There’s Subjective Quality

The hard part (“does any given response feel immersive?”) is subjective and DeepEval’s standard metrics don’t apply well because there’s no ground truth.

The deeper quality question (“did the invented narrative actually keep the player immersed?”) really does come down to human judgment. The best you could do there is a small panel of prompts you play-test manually whenever the system prompt changes, treating it like a regression checklist rather than an automated test.

What This Demonstrates

Grue Whisperer is a small project, but the testing question it poses is not small. The previous posts in this series were about using AI to build and validate an artifact: a parser, grounded against a formal specification, testable against real story files. This project inverts that. The AI is not the thing being tested. It’s the interface layer. And the question of whether that interface layer works correctly is partly technical, partly qualitative, and not fully answerable by automation alone.

That’s not a defect in the approach. It’s an honest description of what LLM-powered interfaces introduce into a testing practice. The Z-Machine is still deterministic. The parser still either accepts a command or it doesn’t. But the layer in between (the translation, the narration, the error absorption) lives in probability space. DeepEval gives you tools to sample that space systematically. The work is in knowing what to sample and what the samples actually tell you.

Next Steps!

This mini-digression into showing an LLM-backed application allowed me to approach testing from a slightly different angle, including whether and to what extent to consider test tooling. This, however, sets the stage for some DeepEval metrics that I have not considered in previous posts. I’ll start the process of looking at those metrics but, before that, the next post will take a dive into some societal aspects.